"We should forget about small efficiencies, say about 97% of the time: premature optimization is the root of all evil." -Donald Knuth

Good, practical, applied science is most often a feedback loop for taking the best results, sometimes mixing them a bit, and repeating them. In fact, this has been algorithmically codified in artificial intelligence through genetic algorithms.
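As a rough illustration of that loop (keep the best, mix them, repeat), here is a minimal sketch of a genetic algorithm in Python. The objective function, population size, and mutation scale are all assumptions picked for a toy example; nothing here models a treatment protocol.

```python
import random


def fitness(x: float) -> float:
    """Toy objective (an assumption for illustration): best score at x = 3."""
    return -(x - 3.0) ** 2


def evolve(generations: int = 50, pop_size: int = 20, seed: int = 0) -> list:
    """Return the best candidate found at the start of each generation."""
    rng = random.Random(seed)  # seeded so the run is repeatable
    population = [rng.uniform(-10.0, 10.0) for _ in range(pop_size)]
    best_per_generation = []
    for _ in range(generations):
        # Selection: keep the better half of the candidates.
        population.sort(key=fitness, reverse=True)
        survivors = population[: pop_size // 2]
        best_per_generation.append(survivors[0])
        # Crossover and mutation: average two survivors, then perturb slightly.
        children = []
        while len(survivors) + len(children) < pop_size:
            a, b = rng.sample(survivors, 2)
            children.append((a + b) / 2.0 + rng.gauss(0.0, 0.1))
        # Survivors carry over unchanged, so the best score never gets worse.
        population = survivors + children
    return best_per_generation


history = evolve()
print(f"first best: {history[0]:.3f}, last best: {history[-1]:.3f}")
```

Because the survivors are carried forward unchanged, each generation's best candidate is at least as good as the previous one, which is the "repeat what worked" half of the loop.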

During the pandemic, the anonymous [great] minds behind the cluster of research aggregation sites including c19early.com have been creative and heroic. So, understand here that as I disagree with a chart made there, I do so with the greatest respect for their accomplishments.

But I'm here to disagree with one of their charts, or at least to suggest that it's misleading relative to the primary point that needs to be made. However, this is really good news, not bad news. In fact, the good news is the very reason I am writing about it.

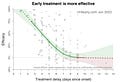

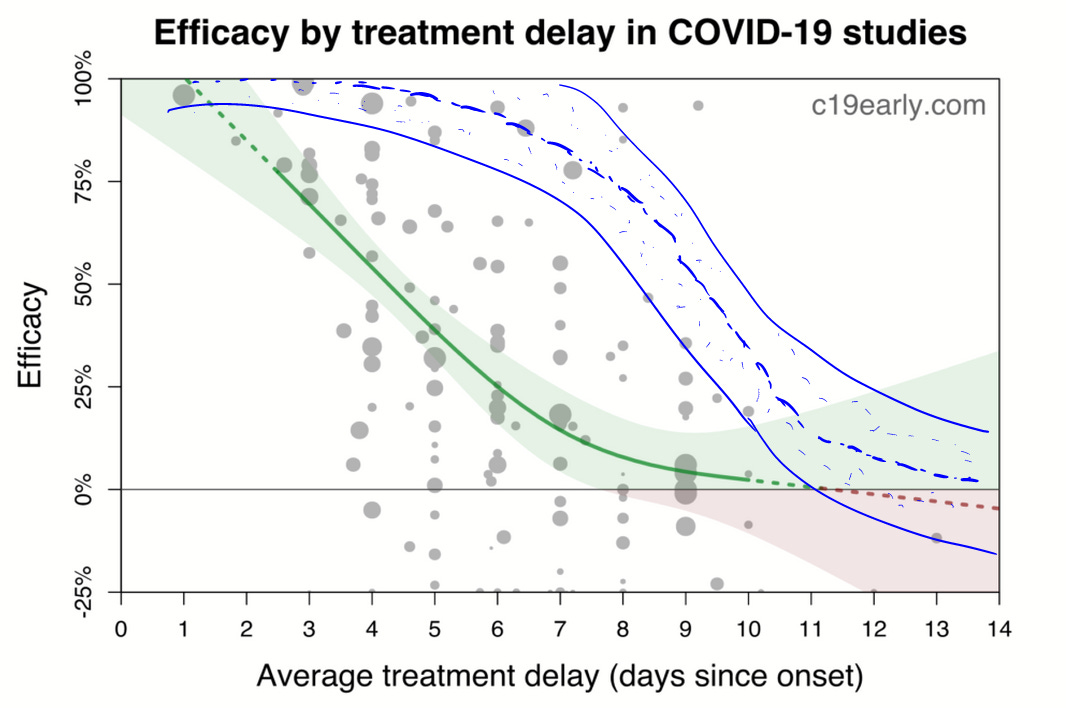

The chart in question is the result of a mixed-effects model analysis of the studies collected there across all the various treatments:

This chart has made the rounds on the internet. That's more a good thing than a bad thing, I suppose, but the chart makes early treatment medicine look less effective than in reality. I will explain…

A (Clearly) Better Framework for Analysis

In one of my first Substack essays, where I critiqued the religious relevance of the Randomized Controlled Trial (RCT), I made a crucial point that needs to be revisited.

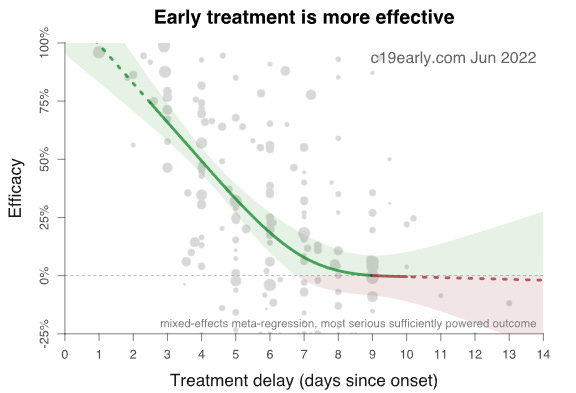

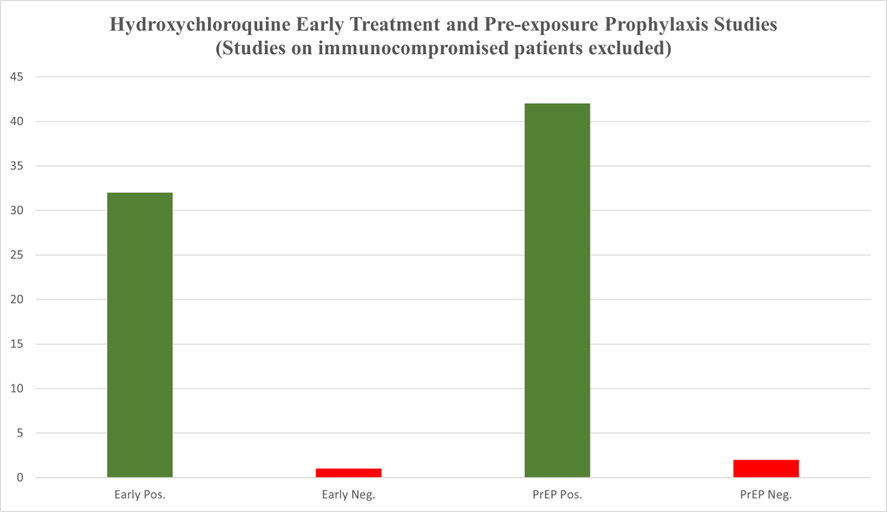

While looking at two charts from March 2021 at HCQmeta.com (one among the family of sites feeding into c19early.com), it occurred to me that if I were building protocols to treat patients, much of the data that follows would not matter for that purpose. When seeking optimization, we ditch the lesser protocols and repeat the better ones (and despite the way Biostatisticians make inappropriate use of p-values, they were intended to be used to gauge and refine results of repeated experiments).

I made the logical point regarding time to treatment in protocols:

However, it makes the most sense to focus our primary attention on the most reasonable treatment protocol, which takes into account that SARS-CoV-2 replication largely ends after the first few days of symptoms. This prioritizes early treatment studies.

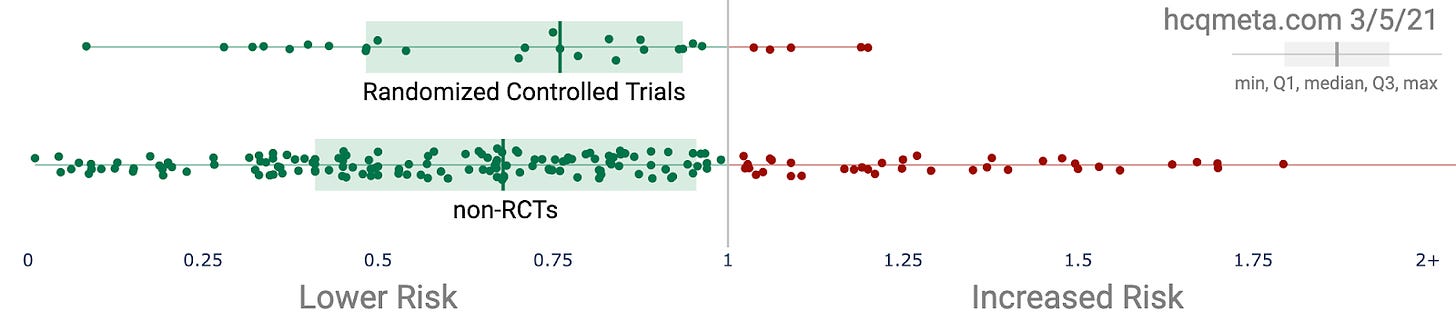

The reason that we should cast aside most of this information for the purpose of crafting a protocol is that a protocol is not a mixed strategy. While I love game theory, protocol writing is solving an optimization problem. Thus, for each treatment, such as HCQ, we should identify those studies in the near orbit of some coherent patterns according to several relevant axes. For instance, we should cast aside the mangled and misleading WHO trials, because the protocols could not be further from optimal protocols. Also, in the most pure kind of academic speak, duh.

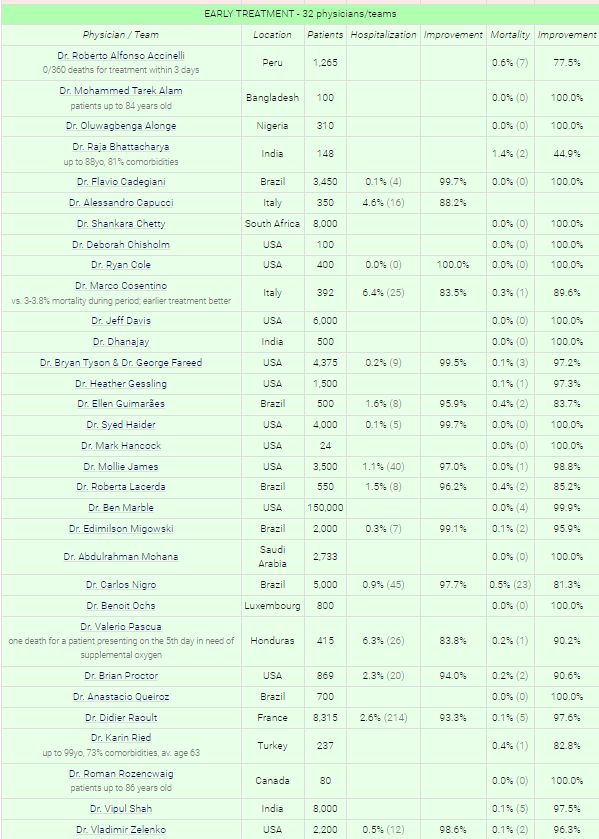

Logically, it makes the most sense to home in on those studies that tend toward optimal protocols. Sadly, much of the best evidence for HCQ efficacy comes not from prospective studies, but from physicians working in the field, using the medicine that had been expected, for years prior to the pandemic, to be the most likely successful treatment for a SARS-CoV. This is sad not because the data is irrelevant, but because it gets systematically and incorrectly undervalued by the biomedical community that many look to with excessive proxy trust.

Source: hcqmeta.com

This chart leaves out additional data from Dr. Peter McCullough, Dr. Stella Immanuel, Dr. John Littell, Dr. Pierre Kory/FLCCC, Dr. Kimberly Milhoan, Dr. Katarina Lindley, Dr. Mary Talley Bowden, Dr. Deborah Chisholm, Dr. Luigi Cavanna, and numerous others. And the overall survival rate is greater than 99.97%, as I noted with a largely overlapping pool of results in Overcoming the COVID Darkness. Such a survival rate is in the ballpark of background survival rates, so we might as well call the "use antivirals, vitamins, zinc, and the kitchen sink (based on symptoms)" approach curative.

During ordinary times, the larger Statistics community correctly points out that the biomedical statistics community is largely a compromised pile of incomprehensible trash that has been allowed to pile up now for decades. Any community that tolerates meta-analyses (Axfors et al., 2021) that make invisible most of the practical data above, as well as most of the studies at hcqmeta.com (particularly the ones that use optimal protocols), while failing to separate analysis according to (1) time to treatment, (2) dosage, and (3) conjunctive therapy, truly is epistemological trash. So too are many of the hospital studies that inherently build Simpson's paradoxes into the results (which likely happens in trials like those the WHO ran, where the protocols were distributed to dozens of nations and were likely applied differently and to different populations).
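To make the Simpson's paradox concern concrete, here is a small sketch in Python. The site names are hypothetical and the counts are illustrative only, patterned on a classic textbook example rather than taken from any COVID study. It shows how one arm can do better within every stratum and still look worse once the strata are pooled.

```python
# Illustrative counts only (not COVID data): (recovered, total) per arm
# at two hypothetical sites that enrolled different patient populations.
strata = {
    "site_low_risk": {"treated": (81, 87), "control": (234, 270)},
    "site_high_risk": {"treated": (192, 263), "control": (55, 80)},
}


def rate(recovered: int, total: int) -> float:
    """Recovery rate as a fraction of the patients in the arm."""
    return recovered / total


def pooled_rate(arm: str) -> float:
    """Recovery rate for one arm after summing counts across all sites."""
    recovered = sum(site[arm][0] for site in strata.values())
    total = sum(site[arm][1] for site in strata.values())
    return rate(recovered, total)


# Per-site comparison, then the pooled comparison that ignores the strata.
for name, arms in strata.items():
    treated = rate(*arms["treated"])
    control = rate(*arms["control"])
    print(f"{name}: treated {treated:.3f} vs control {control:.3f}")
print(f"pooled: treated {pooled_rate('treated'):.3f} "
      f"vs control {pooled_rate('control'):.3f}")
```

In this toy table the treated arm has the higher recovery rate at each site, yet the lower rate in the pooled totals, because most treated patients sit in the high-risk stratum. Separating the analysis by stratum is what exposes the reversal.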

Where is the feedback analysis on best protocols?!

(As of mid-December, 2021)

Where the Biostats community holds 90/10 opinions in support of most of the methods it uses, that ratio has historically flipped entirely whenever the far more numerous and rigorous Statistics community [that wasn't trained in Pharma schools] chimed in. However, at the outset of the pandemic, the broader part of the Mathematics community (not every individual, but enough that it matters) buried its head in the sand. Sadly, its members are now largely some combination of bought, threatened, and wholly lacking in courage. When they do speak up, they are silenced, which can only happen when too few of them speak up.

So, here we are.

Now, let's consider what the first chart above should really look like, were it aimed at the primary goal of protocol optimization.

In blue I've redrawn the efficacy to roughly pattern the results of the best protocols. Note that my chart, crudely but obviously redrawn through optimal points, is consistent with:

- The results of the practicing physicians above.
- The rate of decline in efficacy according to time to treatment protocol separation in the Tyson/Fareed analysis.
- The (obvious) generality that antiviral medications are best used ASAP.
- The known fact that viral loads begin to drop after around a week, and the disease progression becomes largely a matter of inflammation (cytokine storming) and other damage (circulatory system) done by the spike protein.

Can anyone give me a good reason why we should not expect reality to match my redrawn graph?

Good applied science veers widely from the application of stupid methods, even if we label those methods with fancy phrases that inappropriately coopt language to falsely imply auto-rigor, such as the textbook methods masquerading as "Evidence Based Medicine". That's not to say those methods don't have their places, which are sometimes in ideal circumstances that almost never exist. Standing above those methods must be a combination of first principles driven by basic logic. Absent that, the methods become the boundaries of a game that the Pharmafia has spent decades sculpting—for some moment like this when they could be used to engineer a blatantly false reality.

Thank you, thank you for this critical look at early treatments. If AI were used for treatment decision making, it is hard to imagine the advice from AI would be to stay home until your lips turn blue, then come into hospital where you will receive remdesivir and mechanical ventilation. Boy, has the medical community f’d up.

B"H" I dedicate this comment to the memory of Zev ben Aaron

Re RCT fetishism (and specifically HCQ), see this from April/May 2020 from, of all places, Harvard:

Unleash the Data on COVID-19 Maryaline Catillon and Richard Zeckhauser

https://web.archive.org/web/20200505112045/https://twitter.com/daniel_bilar/status/1255193716459003904

"RCTs [..] only ethically acceptable when the safety & perf of a treatment is unknown. When ample data exists, as now, that criterion is not met"

"Analyzing real world data on actual outcomes [..] offers an alternative approach to learn almost immediately"

"High quality case control studies based on 1000s of cases [..] are immensely faster than RCTs"