"You can prove anything you want by coldly logical reason—if you pick the proper postulates." -Isaac Asimov, I, Robot

There is a quick and simple analogy to data science. If you get to engineer the data stream, you control the beyond-damned lies inferred.

Friends of mine from childhood and friends of mine from recent years might describe me as two different people. When I was younger, I was more likely to make mistakes with facts. At times, it would have been fair to call me "sloppy" with research, and that led to frustrating mistakes.

I learned. Not without failures and pains, but I learned.

Friends of mine from recent years know me as an epic note taker. That came about as a combination of a desire to organize more and better details than I can arrange in my head at one time, a desire to keep track of citations that I did not always bother to memorize or even note, and a desire to overcome my own personal defects (dyslexia).

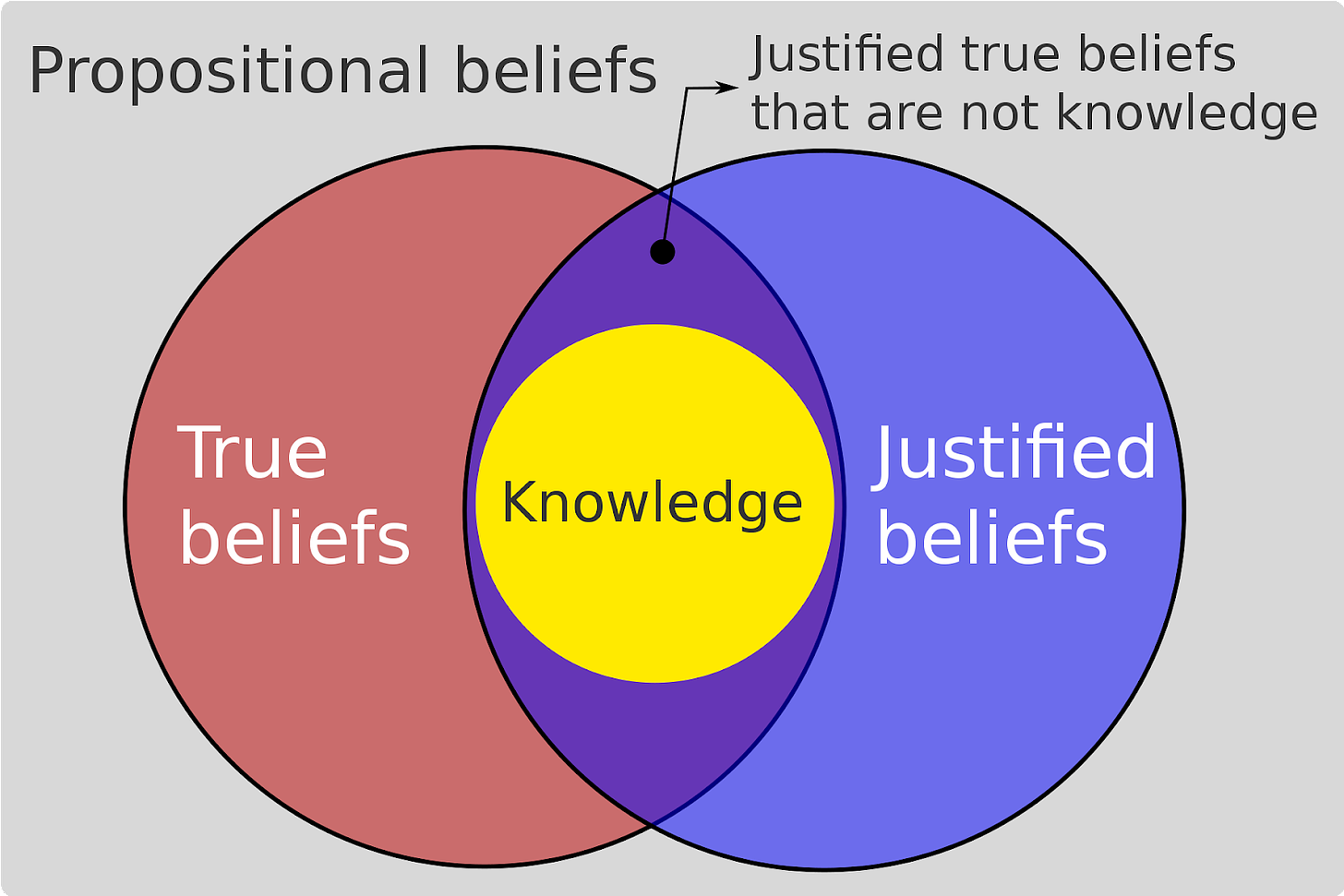

Source: Dominic Mayers via CC BY-SA 4.0

Practicing Good Epistemology

Last April, when I worked on the data from Dr. Brian Tyson's clinics (along with Dr. George Fareed), I wanted to go the extra mile in terms of data epistemology. I didn't have a budget to fly to California, then track down patients and quiz them about the medication they took. However, I looked for the right way to feel comfortable that I understood the nature and veracity of the data [comfortable in neutral skin, which is my way of saying I meditated myself into a mindset of questioning my belief in the result].

Brian answered my query by putting me on the phone with the Imperial County epidemiologist, who stated that the state of California had documented the All Valley Urgent Care (AVUC) clinical data. I could have left it at that, but after that phone call, I did three things:

1. I researched the county epidemiologist.
2. I called her back and talked to her without Brian on the phone.
3. I wrote her an email so that in response I would have (and did obtain) a written statement of the documentation of the AVUC data.

I didn't even mention to Brian that I was doing all that until later. It wasn't that I didn't trust him, but that I wanted any third-party observer to understand that I had done my due diligence in establishing a reason for that trust that went beyond confirmation bias. I'm not even sure that I fully described these steps to him until our mutual friend Joe interviewed us a few weeks later:

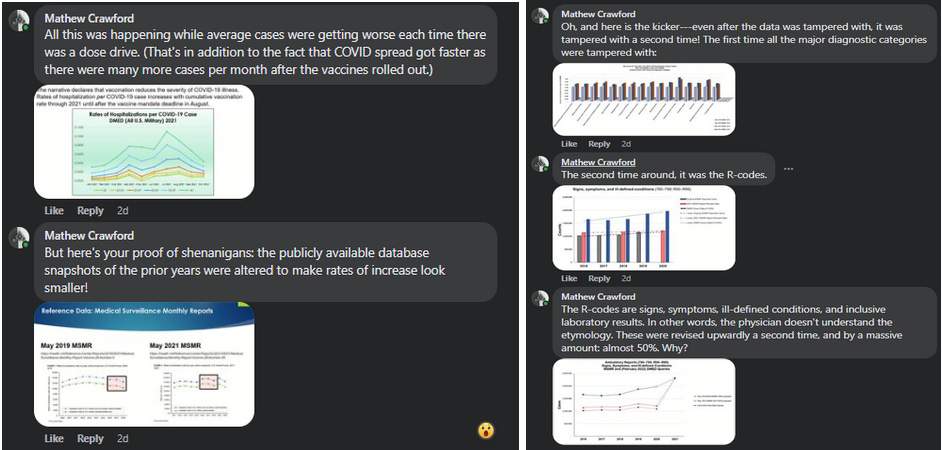

Later, when I was asked to work on the Defense Medical Epidemiological Database (DMED) issue, I chose not to take any data presented without tracking down sources as best as possible. This led to the discovery of the DMED snapshots published in the Medical Surveillance Monthly Reports (MSMR), which are publicly available at health.mil. In turn, that led to the discovery that somebody tampered with the database (certainly for 2016-2019, but likely for 2020 too, in one fell swoop). While my findings changed the story of the whistleblowers, they justified their intuition and action.

That's doing [data] science.

Let's be clear: good epistemology comes at a price. I do not expect people who are not engaged in serious projects to take these steps. But that is also to say that if you are not taking these steps, you are not seriously engaged in a project.

I want to phrase that one more time:

If you're not verifying the data, you're not doing serious statistics, yet.

That's not to say that I expect every use of statistics to involve deep dives or data sourcing. As with all other time investments, there are economic equilibria. Each and every one of us takes some data for granted at times based on knowledge frameworks and proxy trust in networks of knowledge workers. I certainly do! And like most everyone else, I'm usually correct, while sometimes suffering from being wrong and changing my mind later. That's the way principles and heuristics work: well, but imperfectly, and governed by the perceived economics of time and resources in decision-making.

But when issues get serious—when the economic outcomes grow in importance—that's when serious people should take serious steps.

Vaxsplaining From a Data Scientist

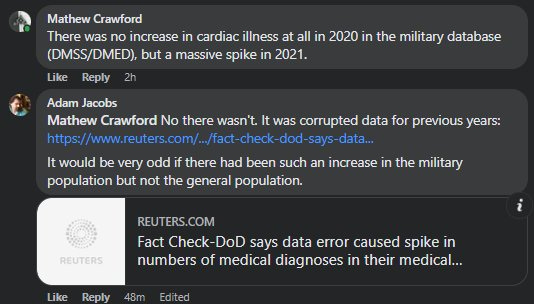

Oh boy, here we go. This was on a thread where Adam Jacobs, a Chief Scientist at a data company, declared that he could find no evidence of an increase in cardiac mortality among young people.

Can't…stop…eyes…rolling…

Adam is also apparently employed at Weill Cornell Medical. I followed up by sharing with him my articles about the DMED, and a number of my graphs and slides. I'll save you most of the boring details.

So, what does a Chief [data] Scientist with Ivy League medical institution ties do when presented with work (data verification and deep dive) that shows negative vaccine efficacy in the military, a manipulated database, increases in cardiac illness associated with vaccine rollout, and an apparent attempt to hide further injury and illness in the R-codes? Does he thank the person who performed the tedious but necessary task of data verification, painstakingly laying out the knowns and known unknowns? Perhaps engage in a thoughtful conversation to reconstruct the details and measure lost QALYs and WELBYs, whether actualized or not? Maybe bring in the troublesome insurance data that would concern anyone paying an ounce of attention?

Perhaps wonder if he should dig further into the nature of mortality statistics he says he got from the CDC?

No. He moves on to trying to score points about a single statistic…which he has also not done the legwork to verify.

After a little more discussion, including observations of increases in cardiac arrest reported by other nations, I gave up. There's only so much time you can spend on people whose interest seems to be in finding the one point you don't have an answer for, so that they can feel like they're winning while ignoring the carnage around them. After his "loony" comment, I was spending the time not for him, but for others who would be reading the discussion.

Also, it seemed likely that he didn't bother to read the DMED articles. At least, he wouldn't answer whether he had or not.

This is a "well educated" data professional in a "respectable position" of authority. This is what we're facing every day. It's like we've been trying to warn everyone since 30 minutes before this video, and we're 14 seconds in.

Serious question: What can be done to wake people up who are this bent on dismissing the glitch in the Matrix? Is this something like total commitment to the charade?

-One of my friends from Peru, now about 25 years old. Stable fibroadenoma for many years. It had never increased in size since it was diagnosed several years ago.

-She took three vaccines (although she did not really want to) in order to be able to participate in social life.

-Guess what happened.

-The following routine medical examination showed a 50% increase in the size of the fibroadenoma, although during all the previous years it had never grown.

-The doctor is mystified.

-My friend wonders if it could have something to do with the vaccines and asked me. She mentions that there could, theoretically, also be other reasons.

-I agree with her that other reasons are always possible, but I told her that there is a high likelihood that the vaccine was (also) involved, because of the temporal association, because of the biological plausibility (there are biological explanations for it), and because many other people have the same problem following vaccination (many doctors see an increase of cancer in vaccinated people).

So I sent her the paper from Seneff et al., in which these top scientists explain that the vaccines lead to massive harm of the immune system and cause "widespread dysregulation of oncogene controls, cell cycle regulation, and apoptosis."

So in other words: You have intelligent tumor control systems in your body. Then you take the vaccine and the intelligent system gets dysregulated. Great idea!!

-My friend suspects that the vaccine may be responsible. Will she report it? I do not think so. I think she does not even know where to report possible adverse events.

-Will the doctor care or mention or admit that it may be the vaccine? No. Will the doctor report the case? Never.

-My friend said Peru just started the second booster. Will she take the fourth vaccine? I do not know, but I would not exclude it; she wants to continue her social life.

-Will the doctor tell her to take the next vaccine? Yes, why not??

Now my question for you Mathew. Why do people accept being severely harmed or murdered??? Why does my friend not visit the doctor, present the paper from Seneff and ask the doctor to stop promoting the vaccine (and instead, start promoting early treatment within the first 3 days)??

-Why are humans like that?

In this case, I think his is a case of “men don’t see what their salaries depend on their not seeing”. In my experience, many bring a debunker's mentality to it: they look for one thing that allows them to dismiss the whole, massive case against the vax and focus only on that.