"I wanted to explain that trusting is harder than being trusted." -Simon Van Booy

A word of encouragement: don't worry if you get lost in the technical jargon at first. Sometimes jargon sinks in only after you get your hands a little dirty, and you'll find that opportunity below.

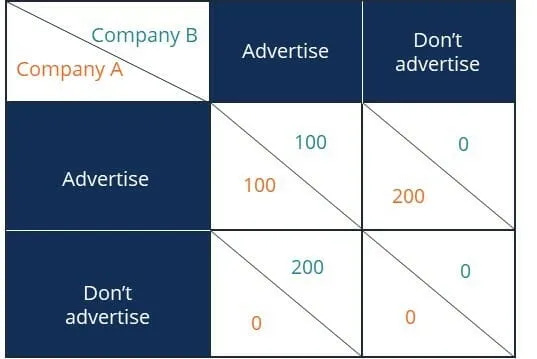

There is no one perfect model for human relationships. However, game theory at least models some basic economics of trust in terms of the utility players gain from their decisions. These models come in two forms: normal form games and extensive form games. In this article, I plan to discuss a particularly challenging normal form game known as the prisoner's dilemma (see here, here, and here). An enormous portion of the world's problems, particularly the most challenging economic and political ones, are essentially prisoner's dilemma (PD) problems or have versions of the problem embedded in them.

At some point in the future, I plan to write my own basic prisoner's dilemma article, but for now I'll let the links and video above suffice. The prisoner's dilemma stands out among basic normal form games because each player's rational decision is to defect, even though mutual defection pays each player less than mutual cooperation would. In technical terms, the Nash equilibrium is for all players to defect. (Edit: for clarity, think of the example below in terms of a system in which everyone needs the service provided by Companies A and B, so each customer one company converts comes from the other company's existing customer base.)
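For readers who want to poke at the definition, here is a minimal sketch that finds the pure-strategy Nash equilibrium of a one-shot PD by checking, cell by cell, whether either player could gain by switching moves alone. The payoffs are an assumption: they are the values Axelrod used in his tournaments (temptation 5, reward 3, punishment 1, sucker's payoff 0), chosen for illustration rather than taken from the Company A and B example. Read the row player as Company A and the column player as Company B.

```python
from itertools import product

# Illustrative payoffs (Axelrod's values), not the figures from the post's example:
# T (temptation) = 5, R (reward) = 3, P (punishment) = 1, S (sucker) = 0.
# Each entry maps (row move, column move) -> (row payoff, column payoff).
C, D = "C", "D"
PAYOFFS = {
    (C, C): (3, 3),  # mutual cooperation: reward R for both
    (C, D): (0, 5),  # row cooperates, column defects: S vs T
    (D, C): (5, 0),  # row defects, column cooperates: T vs S
    (D, D): (1, 1),  # mutual defection: punishment P for both
}
MOVES = (C, D)


def is_nash_equilibrium(row_move, col_move):
    """True if neither player can gain by unilaterally switching moves."""
    row_pay, col_pay = PAYOFFS[(row_move, col_move)]
    row_can_improve = any(PAYOFFS[(m, col_move)][0] > row_pay for m in MOVES)
    col_can_improve = any(PAYOFFS[(row_move, m)][1] > col_pay for m in MOVES)
    return not (row_can_improve or col_can_improve)


equilibria = [cell for cell in product(MOVES, MOVES) if is_nash_equilibrium(*cell)]
print(equilibria)                                 # [('D', 'D')]
print(PAYOFFS[(D, D)], "vs", PAYOFFS[(C, C)])     # (1, 1) vs (3, 3)
```

Mutual defection is the only cell neither company will leave on its own, even though each earns 1 there instead of the 3 it would get from mutual cooperation.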

Iterated Games are Different

While the one-shot prisoner's dilemma can seem bleak, the situation changes when participants play the game repeatedly. We call such a series of games an iterated prisoner's dilemma (iPD). In these games, cooperating can be the best way to maximize individual utility. Since judgment of "personality" comes into play, rationality takes a backseat to pattern recognition and pattern projection.
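To see why repetition changes the calculation, here is a minimal sketch, assuming Axelrod's payoff values and a fixed 10-round match. The functions tit_for_tat, always_defect, and play_match are illustrative names of my own, not code from any particular tournament.

```python
C, D = "C", "D"
# Illustrative payoffs (Axelrod's values): (my move, their move) -> my points.
PAYOFF = {(C, C): 3, (C, D): 0, (D, C): 5, (D, D): 1}


def tit_for_tat(my_history, their_history):
    """Cooperate first, then copy whatever the opponent did last round."""
    return their_history[-1] if their_history else C


def always_defect(my_history, their_history):
    """Defect every round, no matter what."""
    return D


def play_match(strategy_a, strategy_b, rounds):
    """Play an iterated PD and return each side's total score."""
    hist_a, hist_b = [], []
    score_a = score_b = 0
    for _ in range(rounds):
        move_a = strategy_a(hist_a, hist_b)
        move_b = strategy_b(hist_b, hist_a)
        score_a += PAYOFF[(move_a, move_b)]
        score_b += PAYOFF[(move_b, move_a)]
        hist_a.append(move_a)
        hist_b.append(move_b)
    return score_a, score_b


print(play_match(tit_for_tat, tit_for_tat, 10))      # (30, 30)
print(play_match(always_defect, tit_for_tat, 10))    # (14, 9)
print(play_match(always_defect, always_defect, 10))  # (10, 10)
```

The defector "wins" its match against tit_for_tat 14 to 9, but two reciprocators each collect 30, more than twice what the defector earns.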

A few years ago, Nicky Case built a brilliant game, The Evolution of Trust, that demonstrates the point in a relatively simple way. I encourage readers to play the game through, and then to consider the commentary about the various personalities they play against.

If you're game, you might then watch this video about Professor Robert Axelrod's strategic tournaments that used an iPD format.

There is still debate about the "best strategies" in Axelrod tournaments, but it is clear that responsive strategies achieve better results than either doormats (which always cooperate, even against players who defect aggressively) or overly aggressive strategies (which defect often to grab short-term advantages that may or may not hold up over the long term). Between those two extremes, the selfish, aggressive strategies dominate the passive doormats.
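A toy round-robin can make the doormat, aggressor, and responsive categories concrete. This is a sketch under stated assumptions, not a reconstruction of Axelrod's tournament: the four strategy functions (my own labels), the 10-round matches, Axelrod's payoff values (T=5, R=3, P=1, S=0), and counting a self-match's points once are all illustrative choices. It tallies both total points and head-to-head match wins, since those two scoring rules can rank players very differently.

```python
from itertools import combinations_with_replacement

C, D = "C", "D"
# Illustrative payoffs (Axelrod's values): (my move, their move) -> my points.
PAYOFF = {(C, C): 3, (C, D): 0, (D, C): 5, (D, D): 1}


def always_cooperate(mine, theirs):  # the "doormat"
    return C


def always_defect(mine, theirs):  # the overly aggressive player
    return D


def tit_for_tat(mine, theirs):  # responsive: copy the opponent's last move
    return theirs[-1] if theirs else C


def grudger(mine, theirs):  # responsive: cooperate until the opponent defects once
    return D if D in theirs else C


def play_match(a, b, rounds):
    """Play one iterated match and return (score_a, score_b)."""
    hist_a, hist_b, score_a, score_b = [], [], 0, 0
    for _ in range(rounds):
        move_a, move_b = a(hist_a, hist_b), b(hist_b, hist_a)
        score_a += PAYOFF[(move_a, move_b)]
        score_b += PAYOFF[(move_b, move_a)]
        hist_a.append(move_a)
        hist_b.append(move_b)
    return score_a, score_b


def round_robin(strategies, rounds=10):
    """Each pair meets once, and each strategy also meets a copy of itself.

    Returns {name: [total_points, matches_won]}.
    """
    table = {s.__name__: [0, 0] for s in strategies}
    for a, b in combinations_with_replacement(strategies, 2):
        score_a, score_b = play_match(a, b, rounds)
        table[a.__name__][0] += score_a
        if b is a:
            continue  # a self-match is always a draw; count its points once
        table[b.__name__][0] += score_b
        if score_a != score_b:
            winner = a if score_a > score_b else b
            table[winner.__name__][1] += 1
    return table


results = round_robin([always_cooperate, always_defect, tit_for_tat, grudger])
for name, (points, wins) in sorted(results.items(), key=lambda kv: -kv[1][0]):
    print(f"{name:17} points={points:3}  wins={wins}")
```

Under these assumptions, always_defect beats the doormat 50 to 0 and wins three matches while the two responsive strategies win none, yet tit_for_tat and grudger finish with the most points (99 each) and always_defect with the fewest (88). Change the field of entrants or the match length and the ranking can shift, which is part of why the debate continues.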

While this much study of PDs and iPDs is certainly educational, an interesting question remains: how do the best strategies change when the structure of the Axelrod tournament changes? What happens if the payouts change to make cooperation more or less risky? What happens when all players observe all games, versus when players see only their own games? What happens when the lengths of the games vary (possibly at random)?
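One way to start exploring two of these variations is to make the payoff table a parameter and end each match at random. The sketch below is hypothetical: make_payoff, play_random_length, the continue_prob parameter, and the harsher sucker's payoff of -2 are illustrative choices, not part of Axelrod's setup. The check 2R > T + S is the standard extra condition for an iterated PD; it keeps two players from out-earning mutual cooperation by taking turns exploiting each other.

```python
import random

C, D = "C", "D"


def make_payoff(T=5, R=3, P=1, S=0):
    """Build a (my move, their move) -> my points table from the four PD payoffs.

    T = temptation, R = reward, P = punishment, S = sucker's payoff.
    An iterated PD needs T > R > P > S and 2R > T + S.
    """
    if not (T > R > P > S and 2 * R > T + S):
        raise ValueError("these payoffs do not form an iterated prisoner's dilemma")
    return {(C, C): R, (C, D): S, (D, C): T, (D, D): P}


def tit_for_tat(mine, theirs):
    return theirs[-1] if theirs else C


def always_defect(mine, theirs):
    return D


def play_random_length(a, b, payoff, continue_prob, rng):
    """Play at least one round; after each round, continue with probability continue_prob.

    Returns (score_a, score_b, rounds_played). Expected length is 1 / (1 - continue_prob).
    """
    if not 0 <= continue_prob < 1:
        raise ValueError("continue_prob must be in [0, 1) or the match never ends")
    hist_a, hist_b, score_a, score_b = [], [], 0, 0
    while True:
        move_a, move_b = a(hist_a, hist_b), b(hist_b, hist_a)
        score_a += payoff[(move_a, move_b)]
        score_b += payoff[(move_b, move_a)]
        hist_a.append(move_a)
        hist_b.append(move_b)
        if rng.random() >= continue_prob:  # stop with probability 1 - continue_prob
            return score_a, score_b, len(hist_a)


rng = random.Random(2024)  # fixed seed so runs are reproducible
riskier = make_payoff(S=-2)  # cooperating against a defector now costs 2 points
for _ in range(3):
    print(play_random_length(always_defect, tit_for_tat, riskier, 0.9, rng))
```

With continue_prob=0.9 a match lasts 10 rounds on average, but no player can know which round is the last, which removes the "defect on the final move" temptation a fixed, known length creates.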

These are among the problems I've studied for a number of years, and at some point I'll write more about them, or perhaps team up with a computer scientist to study them. I believe the answers these variations of Axelrod's tournament produce will absolutely result in better models for economic and political decision-making.

Final Question

What happens in iPDs in which participants are told that "winning" the most head-to-head games (or, alternatively, accumulating the most total points) might result in immortality?

Is that what the plandemonium is about—at least to some degree?

Thanks, Mathew. The Nicky Case vid made my head spin a bit, but it's fascinating that different numbers of iterations using essentially the same variables produce different outcomes (if I got it right). Truth is stranger than fiction.

You're the first person besides myself that I've ever seen promote or talk about Nicky Case's teaching games.